How Claude Entered Government Systems

Artificial intelligence is quickly becoming embedded in national security operations, raising a critical question for frontier AI companies: how much control should developers retain over how their models are used once they are deployed by government agencies?

That question is now at the center of a growing debate between Anthropic and parts of the U.S. government over the permissible use of Anthropic’s Claude models in military and intelligence contexts. More broadly, the dispute reflects the tension between two priorities that are often aligned in theory but harder to reconcile in practice: adopting powerful commercial AI systems for defense workflows while maintaining meaningful safety and ethical guardrails.

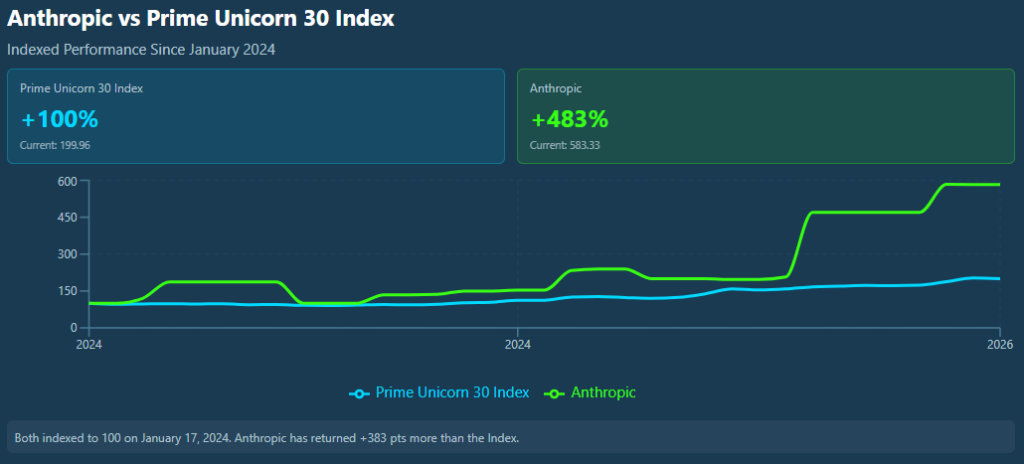

Anthropic, currently the fourth-largest component in the Prime Unicorn Index, has built its reputation around “constitutional AI,” a framework designed to keep models aligned with explicit safety principles and usage boundaries. As governments increase their use of generative AI for sensitive work, those boundaries are being tested in operational settings.

How Claude Entered Government Systems

Anthropic’s government footprint has expanded as agencies explored generative AI for intelligence analysis, cybersecurity, and planning. In late 2024, Anthropic partnered with Palantir Technologies and Amazon Web Services to make Claude available in secure government environments. The collaboration was positioned as a way for federal users to access frontier models through infrastructure designed for government security requirements, while still preserving Anthropic’s safety architecture.

The partnership was a key step in bringing commercial-grade AI into national security systems. It also reflects a broader trend: instead of building every tool internally, the government is increasingly integrating private-sector AI models into its workflows, especially where speed of analysis and decision support matter.

What Claude Is Being Used For

Anthropic has said Claude is being used across multiple government agencies for national security-related work. At a high level, those uses include intelligence analysis, operational planning support, cybersecurity investigations, and simulation or modeling tasks. In practice, generative AI can help analysts summarize large volumes of information, detect anomalies or patterns across datasets, and produce structured outputs that humans can review and refine.

For organizations working with massive flows of information, ranging from reports and communications to imagery and technical data, AI tools can reduce the time required to triage, synthesize, and brief.

The Current Dispute

The core tension is over the terms and guardrails governing how Claude can be used in military contexts. Anthropic has emphasized safeguards intended to prevent misuse or harmful applications and to keep deployment consistent with its safety principles.

Some U.S. defense officials, however, have raised concerns that contractual or technical restrictions attached to commercial AI systems could create operational risk if the tools become embedded in mission-critical workflows. According to Reuters, a senior Pentagon official warned that such restrictions could threaten missions if they limited functionality at critical moments.

Altman and OpenAI Move to Fill the Gap

The dispute has also drawn attention because of how quickly OpenAI moved to fill the gap. The Wall Street Journal reported that, following the government’s move away from Anthropic, OpenAI positioned its models for defense use, framing its approach as compatible with national security requirements while maintaining certain prohibitions (e.g., on domestic surveillance and autonomous offensive weapons). OpenAI’s rapid engagement highlighted how competitive the frontier-model landscape has become for government contracts, and how readily agencies may pivot between vendors when policy terms become contentious.

At its simplest, the dispute raises a foundational governance question for defense AI adoption: who ultimately controls operational use, AI developers or the government agencies that procure and deploy the systems?

Why It Matters

This disagreement is larger than one company. As governments adopt frontier generative AI more broadly, similar issues are likely to recur across vendors: how to balance safety constraints with reliability, flexibility, and mission requirements. The outcome could shape how future government AI contracts are structured, especially around usage terms, accountability, and operational control, and may become a defining issue for how frontier AI companies participate in national security markets.

Anthropic’s rapid rise also highlights its growing importance within the Prime Unicorn Index (PUI). Since January 2024, Anthropic has significantly outperformed the broader PUI benchmark, underscoring how frontier AI companies are becoming major drivers of index performance.